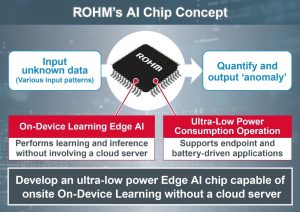

ROHM has developed an on-device learning AI chip (SoC with on-device learning AI accelerator) for edge computers and endpoints in the IoT field. It uses artificial intelligence to predict failures (predictive failure detection) in electronic devices equipped with motors and sensors, in real time and with ultra-low power consumption.

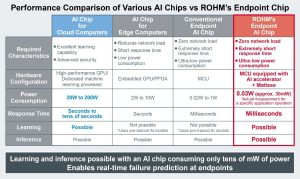

Generally, AI chips perform learning and inference to achieve artificial intelligence functions. Learning requires that a large amount of data be captured, compiled into a database, and updated as needed, so an AI chip that performs learning needs substantial computing power, which in turn consumes a large amount of power. Until now, it has been difficult to develop AI chips for edge computers and endpoints that can learn in the field with low power consumption, as needed to build an efficient IoT ecosystem.

Based on an ‘on-device learning algorithm’ developed by Professor Matsutani of Keio University, ROHM’s newly developed AI chip mainly consists of an AI accelerator (AI-dedicated hardware circuit) and ROHM’s high-efficiency 8-bit CPU ‘tinyMicon MatisseCORE’. Combining the 20,000-gate ultra-compact AI accelerator with a high-performance CPU enables learning and inference with an ultra-low power consumption of just a few tens of mW (about 1,000 times lower than conventional AI chips capable of learning). Because ‘anomaly detection results (anomaly score)’ can be output numerically for unknown input data at the site where equipment is installed, without involving a cloud server, real-time failure prediction becomes possible in a wide range of applications.

Going forward, ROHM plans to incorporate the AI accelerator used in this AI chip into various IC products for motors and sensors. Commercialization is scheduled to start in 2023, with mass production planned for 2024.

Professor Hiroki Matsutani, Dept. of Information and Computer Science, Keio University, Japan

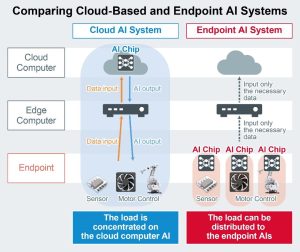

“As IoT technologies such as 5G communication and digital twins advance, cloud computing will be required to evolve, but processing all the data on cloud servers is not always the best solution in terms of load, cost, and power consumption. With the ‘on-device learning’ we research and the ‘on-device learning algorithms’ we developed, we aim to achieve more efficient data processing on the edge side to build a better IoT ecosystem. Through this collaboration, ROHM has shown us the path to commercialization in a cost-effective manner by further advancing on-device learning circuit technology. I expect the prototype AI chip to be incorporated into ROHM’s IC products in the near future.”

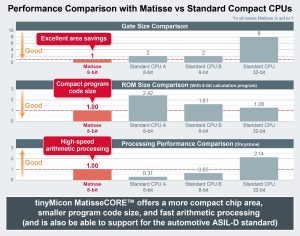

tinyMicon MatisseCORE

tinyMicon MatisseCORE is ROHM’s proprietary 8-bit CPU, developed to make analog ICs more intelligent for the IoT ecosystem. An instruction set optimized for embedded applications, together with the latest compiler technology, delivers fast arithmetic processing in a smaller chip area and program code size. High-reliability applications are also supported, such as those requiring qualification under the ISO 26262 and ASIL-D vehicle functional safety standards. In addition, the proprietary onboard ‘real-time debugging function’ prevents the debugging process from interfering with program operation, allowing debugging to be performed while the application is running.

Details of ROHM’s AI Chip (SoC with On-Device Learning AI Accelerator)

The prototype AI chip is based on an on-device learning algorithm (three-layer neural network AI circuit) developed by Professor Matsutani of Keio University. For commercialization, ROHM downsized the AI circuit from 5 million gates to just 20,000 (0.4% of the original size) and reconfigured it as a proprietary AI accelerator (AxlCORE-ODL) controlled by ROHM’s high-efficiency 8-bit CPU, tinyMicon MatisseCORE. The result is AI learning and inference with an ultra-low power consumption of just a few tens of mW. ‘Anomaly detection results’ can be output numerically for unknown input data patterns (e.g. acceleration, current, brightness, voice) at the site where equipment is installed, without involving a cloud server or requiring prior AI learning. This allows real-time failure prediction (detection of predictive failure signs) by onsite AI while keeping cloud server and communication costs low.
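To make the idea of a numerical anomaly score from a three-layer network more concrete, here is a minimal Python sketch. It is not ROHM's or Professor Matsutani's implementation, since the article does not describe the algorithm's internals. As an assumption, it uses a small autoencoder whose output layer is updated one sample at a time with a plain gradient step, and it treats the reconstruction error as the anomaly score. The class name `TinyAutoencoder`, the layer sizes, the learning rate, and the synthetic sensor pattern are all hypothetical.

```python
import math
import random


class TinyAutoencoder:
    """Hypothetical three-layer network (input, intermediate, output).

    Assumption: input-to-intermediate weights are fixed random values and only
    the output layer is trained, one sample at a time.
    """

    def __init__(self, n_inputs, n_hidden, learning_rate=0.05, seed=0):
        rng = random.Random(seed)
        self.w_in = [[rng.uniform(-1, 1) for _ in range(n_inputs)]
                     for _ in range(n_hidden)]
        self.b_in = [rng.uniform(-1, 1) for _ in range(n_hidden)]
        self.w_out = [[0.0] * n_hidden for _ in range(n_inputs)]
        self.lr = learning_rate

    def _hidden(self, x):
        # Intermediate layer: weighted sum followed by tanh.
        return [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
                for row, b in zip(self.w_in, self.b_in)]

    def _reconstruct(self, h):
        # Output layer: linear reconstruction of the input.
        return [sum(w * hi for w, hi in zip(row, h)) for row in self.w_out]

    def score(self, x):
        """Anomaly score: mean squared reconstruction error for one sample."""
        y = self._reconstruct(self._hidden(x))
        return sum((yi - xi) ** 2 for yi, xi in zip(y, x)) / len(x)

    def learn(self, x):
        """One online gradient step on the output weights for one sample."""
        h = self._hidden(x)
        y = self._reconstruct(h)
        for i, row in enumerate(self.w_out):
            err = x[i] - y[i]
            for j in range(len(row)):
                row[j] += self.lr * err * h[j]


# Synthetic stand-in for a "normal" sensor window (e.g. one vibration cycle).
rng = random.Random(1)
normal_pattern = [math.sin(2 * math.pi * k / 8) for k in range(8)]


def noisy(pattern):
    return [v + rng.gauss(0, 0.05) for v in pattern]


model = TinyAutoencoder(n_inputs=8, n_hidden=8)
for _ in range(500):  # learning happens in the field, sample by sample
    model.learn(noisy(normal_pattern))

normal_score = model.score(noisy(normal_pattern))
anomaly_score = model.score([1.0] * 8)  # a pattern never seen during learning
print(f"normal: {normal_score:.4f}, anomaly: {anomaly_score:.4f}")
```

In this sketch the score stays small for inputs that resemble what the model has learned and grows for unfamiliar patterns, so a threshold on the score could serve as a failure warning. How the actual chip computes and thresholds its score is not stated in the article.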

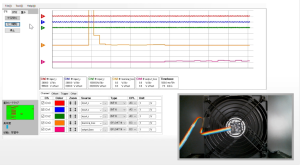

To evaluate the AI chip, an evaluation board is provided with Arduino-compatible terminals that can be fitted with an expansion sensor board for connecting to an MCU (Arduino). Wireless communication modules (Wi-Fi and Bluetooth) and 64 kbit of EEPROM are mounted on the board. By connecting units such as sensors and attaching them to the target equipment, users can verify the effects of the AI chip on a display. The evaluation board is available on loan from ROHM sales.

AI Chip Demo Video

A demo video showing this AI chip used with the evaluation board is available. https://youtu.be/SVn5CKFX9Uo

Terminology

Edge Computer / Endpoint

Servers and computers that form the basis of big data connected to the cloud are called cloud servers and cloud computers, whereas edge computers are computers and devices on the terminal side. Endpoints refer to devices/points at the end of edge computers.

AI Accelerator

A device that improves the processing speed of AI functions by using dedicated hardware circuits instead of software running on a CPU.

Digital Twin

A technology that expresses and reproduces information in the real world but in a virtual (digital) space, like a twin.

Three-Layer Neural Network

A neural network (a model of mathematical formulas and functions inspired by the human brain) with a simple three-layer structure made up of input, intermediate, and output layers. Deep learning involves adding dozens of intermediate layers to achieve more complex AI processing.
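As an illustration only (the notation here is an assumption and does not come from ROHM), a three-layer network with input vector $\mathbf{x}$, intermediate-layer output $\mathbf{h}$, and output vector $\mathbf{y}$ is commonly written as:

$$
\mathbf{h} = f(W_1 \mathbf{x} + \mathbf{b}_1), \qquad \mathbf{y} = W_2 \mathbf{h} + \mathbf{b}_2
$$

where $W_1$ and $W_2$ are weight matrices, $\mathbf{b}_1$ and $\mathbf{b}_2$ are bias vectors, and $f$ is a nonlinear activation function applied element by element.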

Arduino

An open-source platform, popular worldwide and supplied by Arduino, consisting of a software development environment and a board equipped with input/output ports and an MCU.